Artificial Intelligence survival guide

After lurking in our imagination for centuries, Artificial Intelligence is rapidly making the jump from postgraduate research papers and sci-fi flicks to actual commercial products that promise to change the way we interact with digital machines.

The implications will be numerous and drastic, and they carry the fears and anxieties that automation usually brings: jobs becoming obsolete, industries becoming less human-centered, work ethics becoming, so to speak, more problematic.

Interestingly, one of the first AI applications to become obvious, efficient and even profitable without raising a huge ethical dilemma is text-to-image generation: a way to translate descriptive words into synthetic images or, to paraphrase one marketer's label, a way "…of expanding the limits of human imaginative powers".

What everyone now calls AI Art has lived on the edges of the creative industries for years. My first memory of a Google DeepDream video goes back at least six or seven years. But the recent wave of services, offered to a much wider audience with simplified access, has placed the subject at the very center of the digital creative world. Since then, comments of awe and excitement, but also of pessimism, have flooded social networks.

I decided to experiment my way into this trend before taking a negative or anxious position on the whole thing. I got access to a couple of the most widely used platforms, played with the one I found most compelling, and generated images until my quota ran out. Here are a few thoughts that might help us embrace this very powerful tool while coming to terms with our fears.

1. This is a commercial concept that feeds on human desire

Let’s not forget that.

We are going this way because this idea has been implanted in our minds since the very beginning of our history. Examples abound: from papyrus incantations which allegedly made statues move in the 2nd century A.C. [1, Chapter 2], to a mechanical chess-playing Turk in the 18th century [2], to the voice-based assistants you can find in your phone or TV today. AI represents the pinnacle of the human desire to "instill life and understanding" into inert matter. And now, thanks to faster electronic components and intense computing research, this desire has found a way to become a more or less real possibility. Plus, its very name makes a promise that attracts a lot of attention, and money.

At the same time, being such an inherently human affair, the field of AI is highly susceptible to political and business narratives, as well as to societal constraints that can affect the potential and scope of the tools it deploys. This means that advanced tools like these usually follow the agenda of their creators and of their context, and will by no means suit every user or intent.

Using an AI-based image generation environment is not exactly easy yet, but it is getting much better. The easiest to use, and the most interesting to my taste, was probably Midjourney, so I will briefly describe how it works, both for people who haven't used it and in the hope of supporting my argument.

Midjourney has a web interface where you can find your work, information and certain features, but all of the image generation happens after connecting to Discord. This well-known streaming and communication platform offers an expanded chat environment in which you can interact with other users or with the AI, which, in the form of a bot, constantly listens for prompts and returns images to various public channels or directly to you.

They have established community rules that shape the use scenarios, and policies that define the range of imagery that can be produced with their services. For example, certain words have been gradually banned because of the kind of results they tend to yield. Moreover, users who try to circumvent these limits are, in theory, denied access.

The developers have also defined lists of styles you can use to guarantee a fancy outcome, prompt phrase generators in case you have no idea what to describe, and Discord channels that "guide" users by forcing words into their prompts and comparing their results with others around themes such as "environments" or "abstract".

If you've seen more than enough Cyberpunk-themed, concept-art-like scenes in your life, brace yourself, because the wave is only growing higher.

In short: a lot is still taking shape here. These services are being designed for millions of users and must find a balance between creativity, censorship, content management, authorship and use scenarios. This is incredibly interesting, because everything happens on Discord and everyone is welcome to join the discussion, but it could also lead to certain "directives" that tend to homogenize the whole platform and steer it towards a few dominant aesthetics. In my opinion, this could do more harm than good.

This brings me to my second point.

2. This is just the beginning, and humans tend to group around the known and the like-minded.

So there will still be plenty of room for artists to play.

So far, these services center on giving anyone the chance to describe an image in words via a chat prompt, including certain output parameters and a desired visual treatment. The "intelligent" bot tries to interpret these variables and blend them into a balanced image composition, according to the models of human perception it has been trained on. After about a minute, it sends you back a message containing four different low-resolution images. From these, you can choose whether one or more are good enough to upscale, or at least to generate four more variations from.

This feels absolutely magical, and it makes these tools highly addictive and fun. But it is also dazzling, and discouraging, when you think of how much time a human artist would need not just to conceive but to create four different variations of an idea that this thing delivered within 60 seconds.

Yet beyond the speed, the quality of certain results and the community's enthusiastic welcome, when I scroll through the channels or public galleries I do not see "creativity" improving as the number of users grows; I perceive something else. As on other social networks, creative clarity, or visual consistency, comes from a few users, and it is relatively rare to spot someone who is really exploring the potential of individual prompting.

Prompting offers an interesting and novel technique for bringing ideas to life. It is not difficult to generate a random, good-looking picture by typing some basic instructions and a style, or by copying someone else's prompt and changing a few things. But it is difficult to achieve what you really envision. Starting from a random seed (I haven't found a tool that lets you feed in your own images and mix them with your prompt) makes it harder to art-direct your ideas. Learning to send valid parameters along with your prompt is very important, together with lots of experimentation, browsing and a good sense of visual thinking.

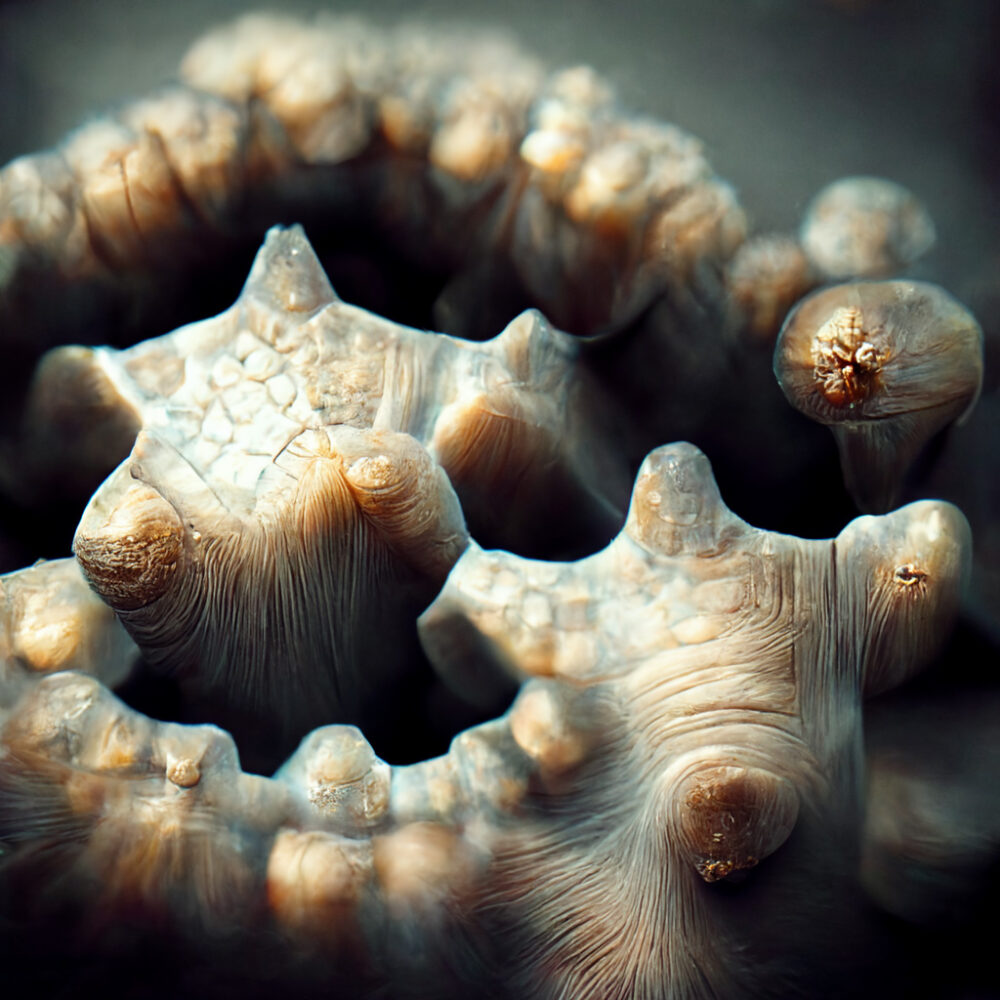

Samples from my own learning path: very stylized or graphic results from the first prompts; in the last images I managed to reproduce a certain style and structural unity.

I guess this method will improve and eventually offer more options. Web trends, on the other hand, tend to define the direction of the community's content. Users echo one another constantly, and many use someone else's prompts after getting frustrated with their own results. On some channels there are a lot of really funny, disappointing or grotesque results caused by naive prompting; on others, there are lots of very similar, grandiloquent, but far too impersonal and repetitive images. In my opinion, the repetition and overuse of certain aesthetic strands might lead to over-saturation, which in turn would create demand for better artistic judgement, good prompting skills and strategies that mix techniques, if these tools are to be used in professional environments.

These capacities can already be observed in an experimental bunch of users who concentrate not just on replicating images or styles they like, but on exploring what these bots are actually good for: visually transitioning between unrelated concepts and morphing things into the unknown, while contributing to the general discussion.

Which brings me to the third point.

3. Debunk the myth of creativity.

Neural networks are not really intelligent yet, because they are not conscious: they are not self-reflective or sentient. Or so we have learned.

Lev Manovich describes how we tend to relativize the concept of intelligence every time a machine achieves something it supposedly shouldn't be able to do [3]. This is crucial to understanding what is happening today: it was difficult to accept a computer's victory over a Go master in 2016 (have you heard the story of AlphaGo?), but accepting that machines can surpass us in one of the key human abilities, artistic creation, is another story.

We very much like to believe that we are the definition of intelligence, and that we can program machines to efficiently recreate those human behaviors that can be mechanically reproduced. Paradoxically, every time a machine masters a new task, we tend to conclude that the task was more mechanical than we thought, and therefore programmable and predictable. Basically, after a machine passes a test towards "being" human, we simply choose to dehumanize that particular behavior.

Manovich recalls Ludwig Wittgenstein's approach to this matter in the form of a simple question: might it not be the case that, instead of testing which human behaviors can be mechanized and reproduced, we are really proving that human behavior is mechanical by nature?

That is a completely different thing. To quote directly: "…creativity might be overhauled as a human faculty simply because we do not understand its workings" [4].

On top of that, in the same article, the author confronts us with hard examples of sacred instances of human creativity being crushed by experimental AIs: unfinished symphonies by classical composers that have been "completed" by AIs, or trained neural networks capable of writing articles like the one you are reading without making readers doubt that a human wrote them.

Even this small amount of evidence should make us question ourselves: are we ready to look at things that way? Would accepting our own programmability and mechanical nature be enough to let go of some anxiety, and to let the tool be a better tool instead of seeing it as a competitor?

In the end, there is an ethical question at the base of all this: who makes the decisions? As long as complex creative projects need to be produced, human decision-making is crucial and will remain so. And that is the most positive thought we can have. As long as we take part in shaping these technologies, artists will have a very good time using these tools to make bigger leaps while producing a project. This technology feels super useful, and it will become more and more present in production environments. How it does so is still up to us, but we need to become fully aware of what is going on here.

4. Fight for Ethics in the Industry (and in every field)

By contrast, if we look at what is going on in the military or at Google, for instance, entities that are barely regulated by the public or by governments, the story is already taking a dire turn.

In an interview with Wired, Timnit Gebru, a computer scientist who worked on an AI ethics team at Google, explains how she and others were cut out of the loop and eventually dismissed from the company for insisting precisely on the importance of thinking collectively about the scope and reach of AI applications. Slowing down is apparently not an option for the moneymakers. In the interview, she also describes how creepy companies were offering to use AI to predict criminal behavior and to use those predictions against people in court, which led her to work as an activist against the negative impact of AI on society [5].

The concept of negative impact is closely tied to decision-making: how much of this power are we willing to hand over to error-prone, biased and under-tested technologies, and how much will corporations try to increase it in the name of efficiency and profit? Could such an attitude emerge in one of the existing or emerging image-generation platforms?

Will we support and finance tools that go that way? Will we just pretend we don't care? It's hard to say how best to take part in this discussion, but as a start, I'd encourage people and creators to look for themselves and help keep a healthy focus on how AI can positively expand the capacities of art-making, instead of becoming an ethical problem and a hated rival for many.

Conclusion

I think our fear should be directed there: at humans who are too greedy or too short-sighted, too delirious or too naive to take a breath from the exhilarating tech race and think. Think about why we are doing these things, and what effects they will have on society, on the economy and on our collective psyche.

So far, the teams behind every image-prompting project I've explored seem legitimate, open to discussion, welcoming and still conservative about profit. Let's say we are still witnessing a transition from intense research to a viable product that can be monetized and needs users to grow.

But that might change at any moment, and some of these projects, or an emerging one, might be tempted to go the Facebook/Google way.

Instead of looking the other way or thinking only about our creative egos, we need to become literate about this, try to influence developers and investors with the genuinely useful applications we can foresee, and always stay a step ahead before accepting features that might put not just us artists but whole societies at the mercy of poorly designed, unregulated, pseudo-intelligent systems.